Section 4: Executing Large Models on Vista with Tapis and FlexServ

Stage 4.1: Running FlexServ on Vista with Tapis

Step 4.1.1: Adding TMS Credentials for the Vista System

If you set up TMS credentials in our previous hands-on session, you can skip this step. Otherwise, follow the instructions below to add TMS credentials for the Vista system.

To access the public system running on Vista, you first need to add TMS credentials for it. TMS (Trust Management System) credentials on Tapis systems are temporary credentials, generated by the TMS service and stored in the Tapis Security Kernel (SK), that allow services or applications to securely access external resources on behalf of a user. Instead of storing permanent usernames or passwords, Tapis retrieves the required credentials from the TMS service at runtime. This keeps sensitive information encrypted and centrally managed while still enabling automated job execution on Tapis systems.

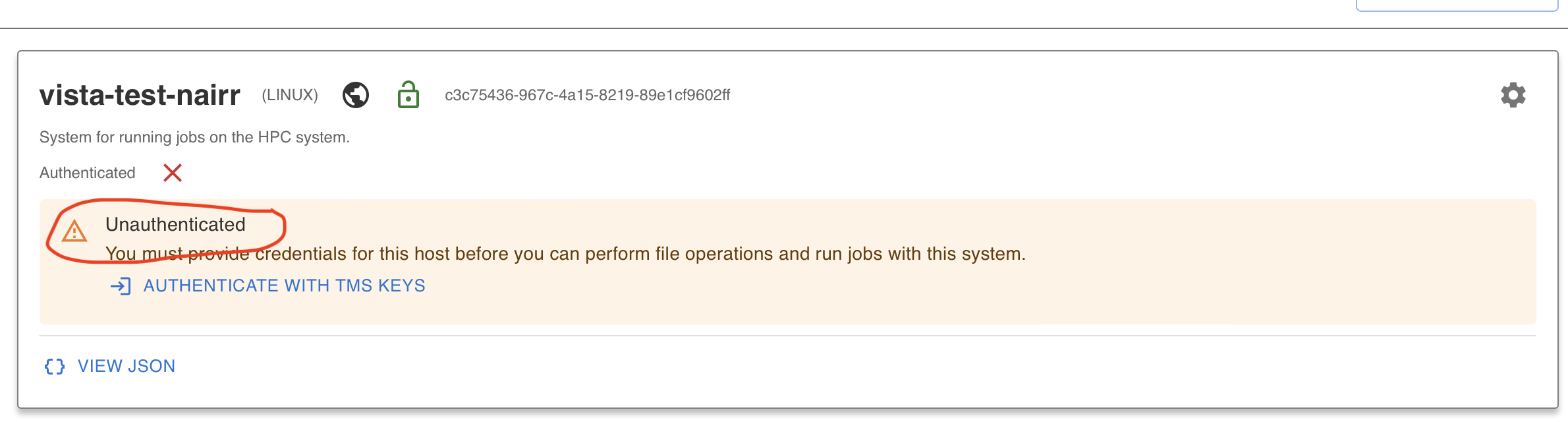

When you log in to the Tapis UI and click Systems, you should see one public system that has been pre-registered for you. However, you are not yet authenticated to access its files.

Click Authenticate with TMS Keys to add your credentials.

For detailed instructions on adding TMS credentials, please refer to the tutorial here.

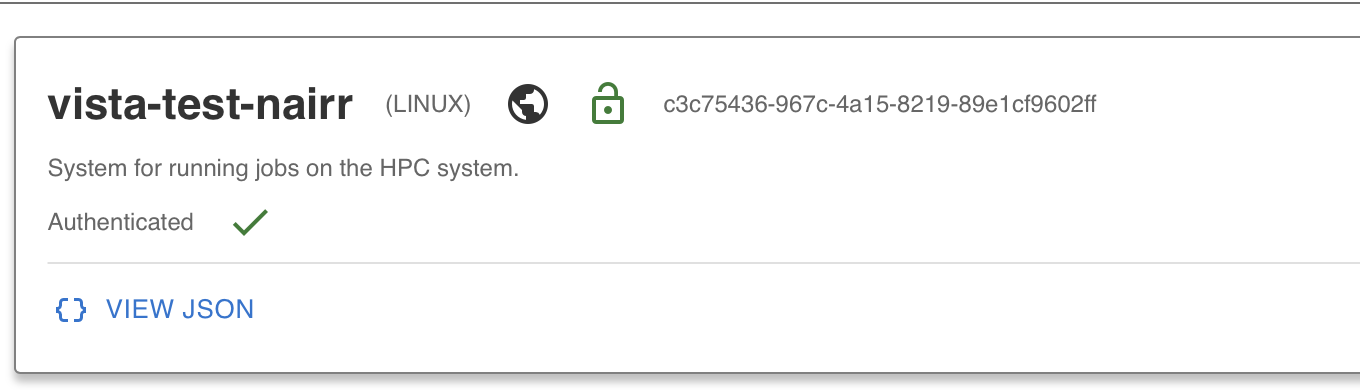

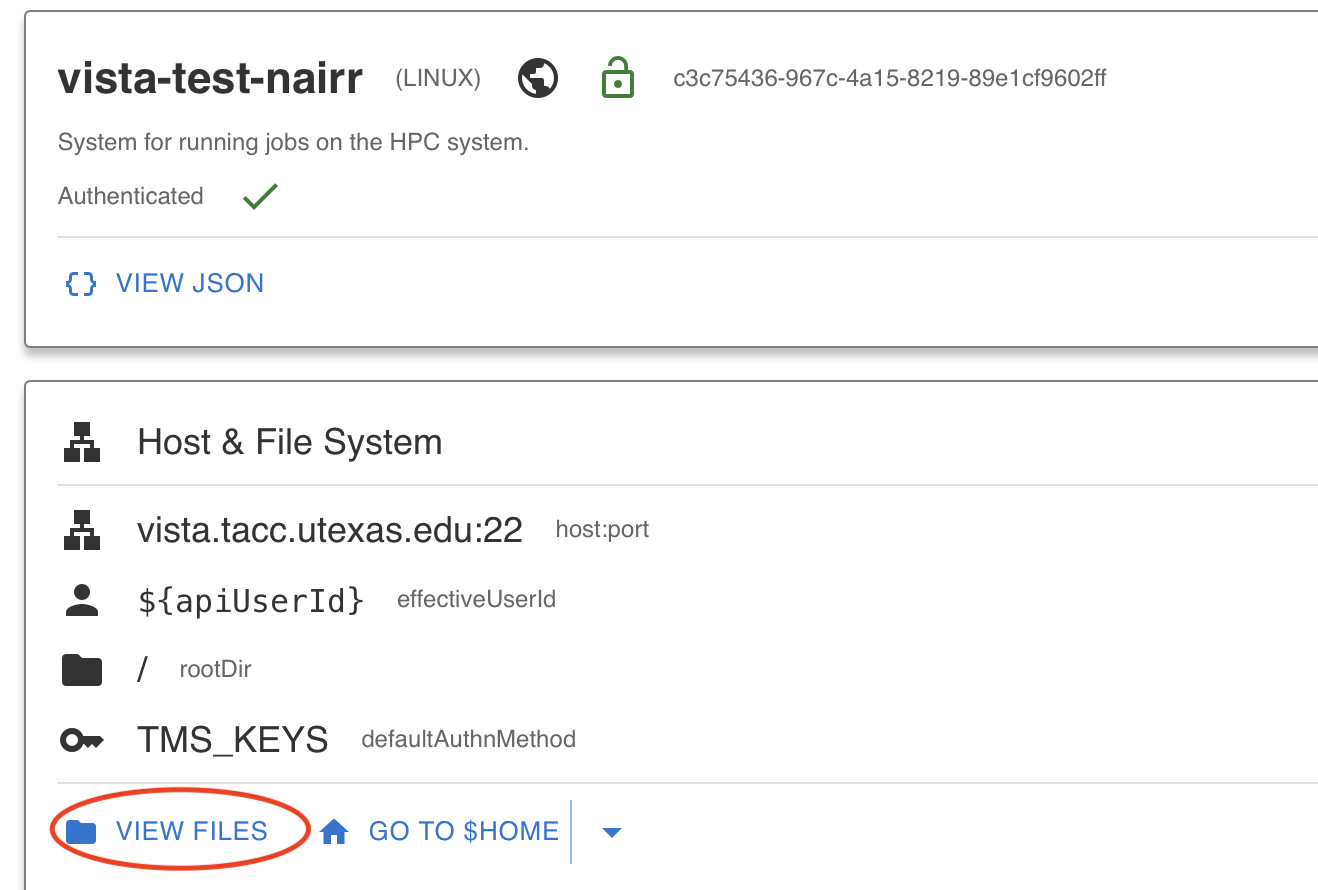

Once authentication is complete, click the View Files button to browse files on Vista. If the files are listed, you have successfully added your TMS credentials and are authenticated to access the Vista system.

Step 4.1.2: Running the FlexServ Application on Vista

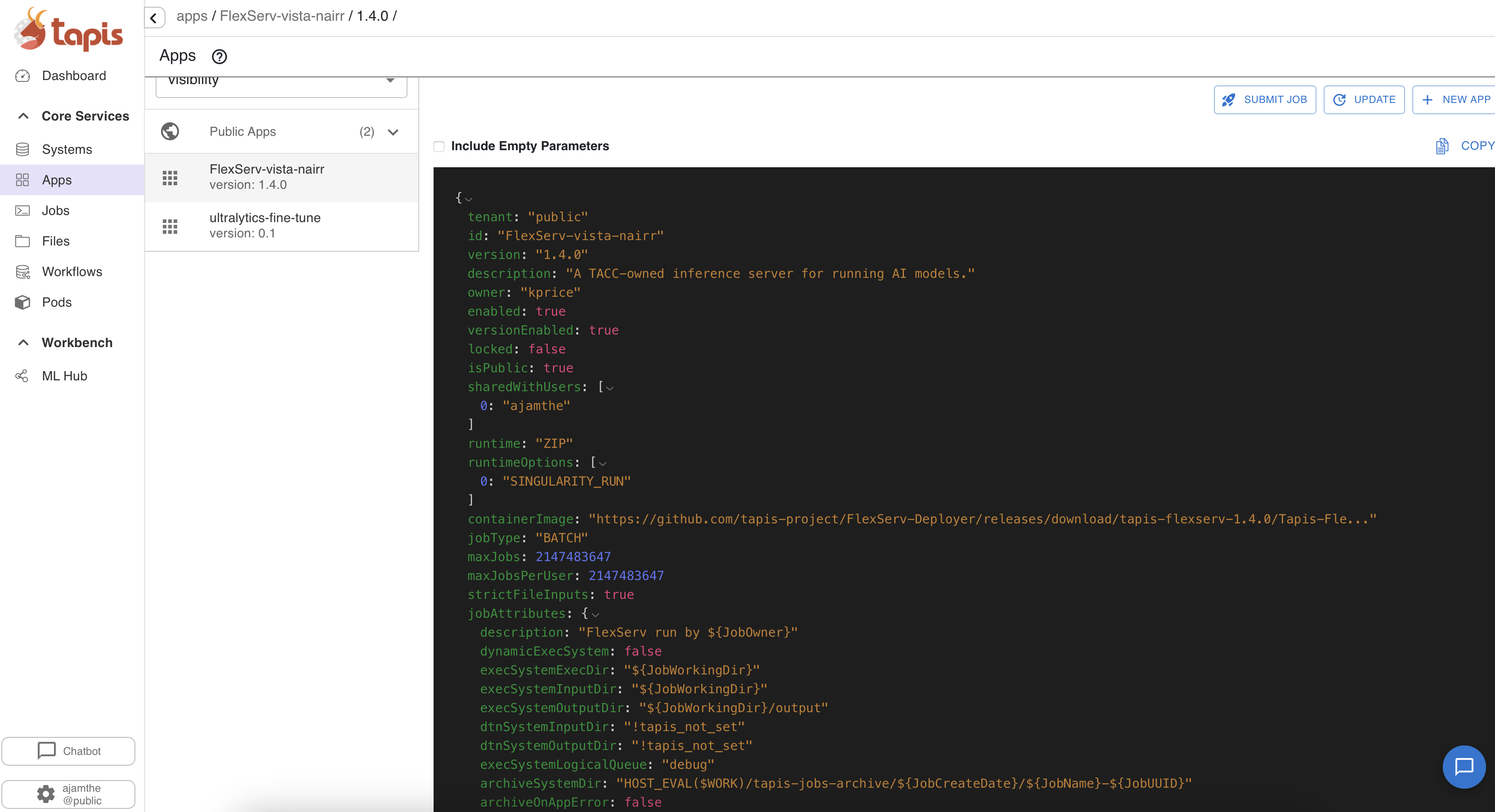

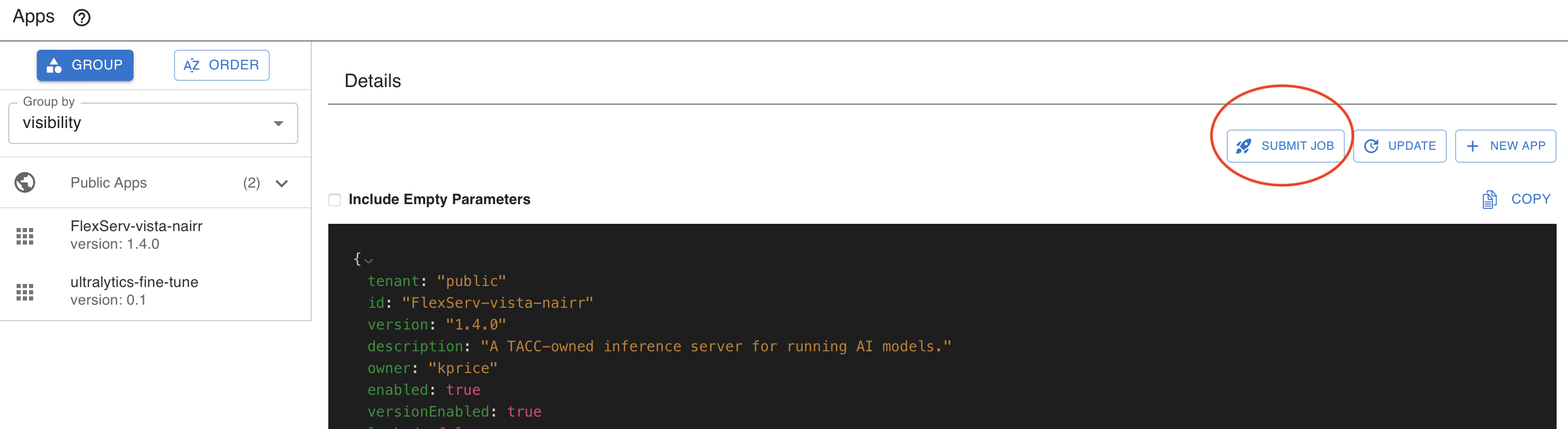

In the Tapis UI, navigate to Apps. You should see the FlexServ application already registered: FlexServ-vista-nairr, version 1.4.0.

Step 4.1.3: Submitting a FlexServ Job Using the Tapis UI

1. Initiate Submission

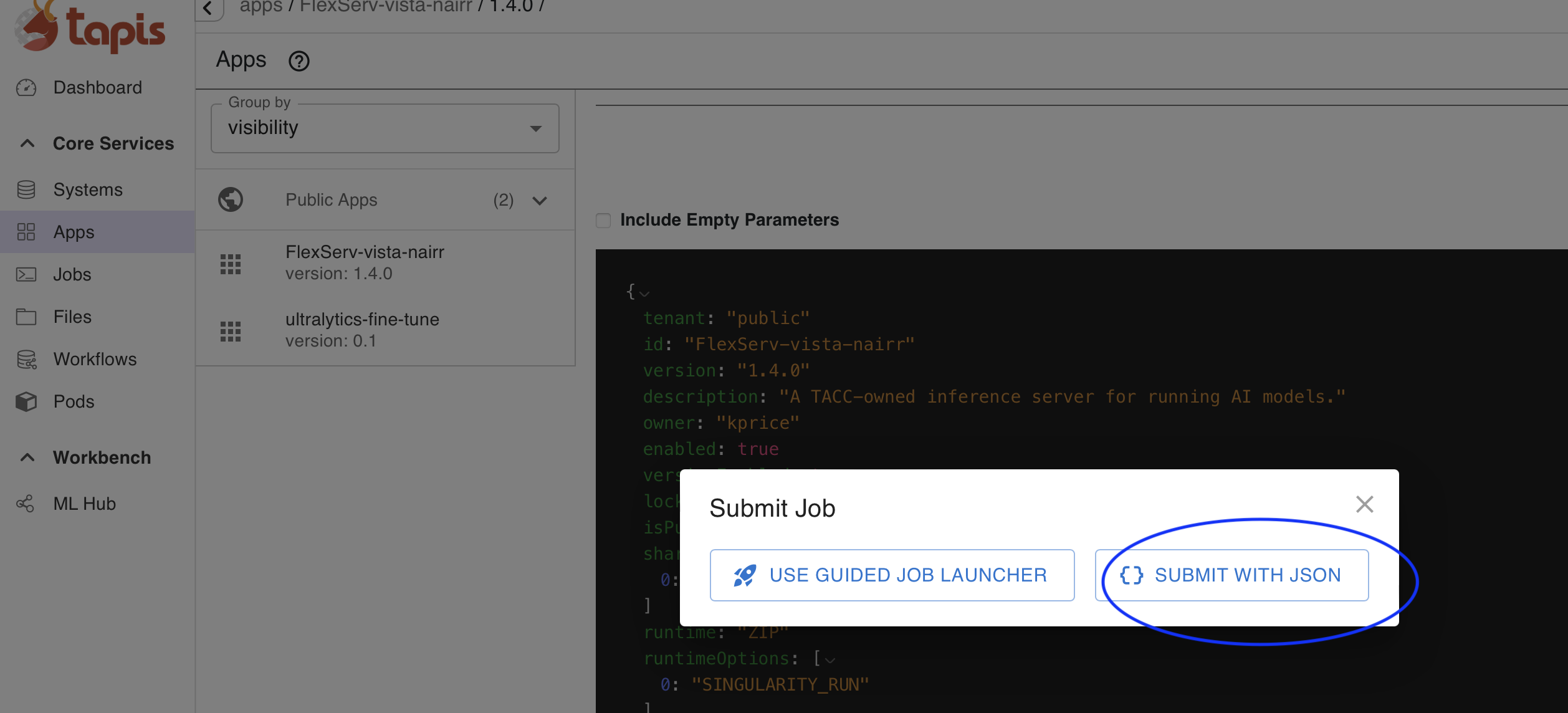

In the Tapis UI, navigate to the application FlexServ-vista-nairr, click the Submit Job button, and select SUBMIT WITH JSON.

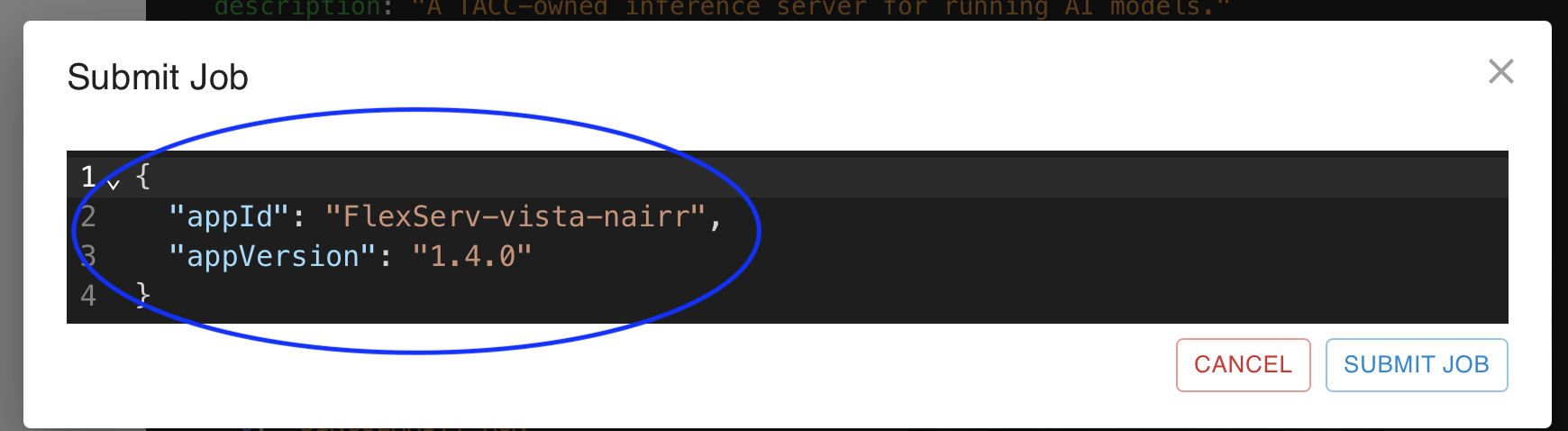

2. Configure the Payload

Replace the default JSON in the editor with your job definition by pasting in the JSON below.

```json
{
  "name": "tap_flexserv_vista_test",
  "appId": "FlexServ-vista-nairr",
  "appVersion": "1.4.0",
  "execSystemId": "vista-test-nairr",
  "tenant": "public",
  "execSystemLogicalQueue": "gh",
  "maxMinutes": 60,
  "parameterSet": {
    "appArgs": [
      { "arg": "--flexserv-port 8000", "name": "flexServPort" },
      { "arg": "--model-name Qwen/Qwen3.5-0.8B", "name": "modelName" },
      { "arg": "--enable-https", "name": "enableHttps" },
      { "arg": "--device auto", "name": "device" },
      { "arg": "--dtype bfloat16", "name": "dtype" },
      { "arg": "--attn-implementation sdpa", "name": "attnImplementation" },
      { "arg": "--model-timeout 86400", "name": "modelTimeout" },
      { "arg": "--quantization none", "name": "quantization" },
      { "arg": "--is-distributed 0", "name": "isDistributed" }
    ],
    "envVariables": [
      { "key": "PRI_MODEL_HOST", "value": "HOST_EVAL($SCRATCH)/flexserv/models" },
      { "key": "PUB_MODEL_HOST", "value": "/work/projects/aci/cic/apps/flexserv/models" },
      { "key": "APPLY_PATCH", "value": "1" },
      {
        "key": "APPTAINER_IMAGE",
        "value": "/work/projects/aci/cic/apps/flexserv/zhangwei217245--flexserv-transformers--1.4.1.sif"
      }
    ],
    "schedulerOptions": [
      { "arg": "--tapis-profile tacc-apptainer", "name": "TACC Scheduler Profile" },
      { "name": "Reservation Name", "arg": "--reservation GHTapis+Nairr" },
      {
        "name": "TACC Resource Allocation",
        "description": "The TACC Allocation associated with this job execution",
        "include": true,
        "arg": "-A TRA24006"
      }
    ]
  }
}
```

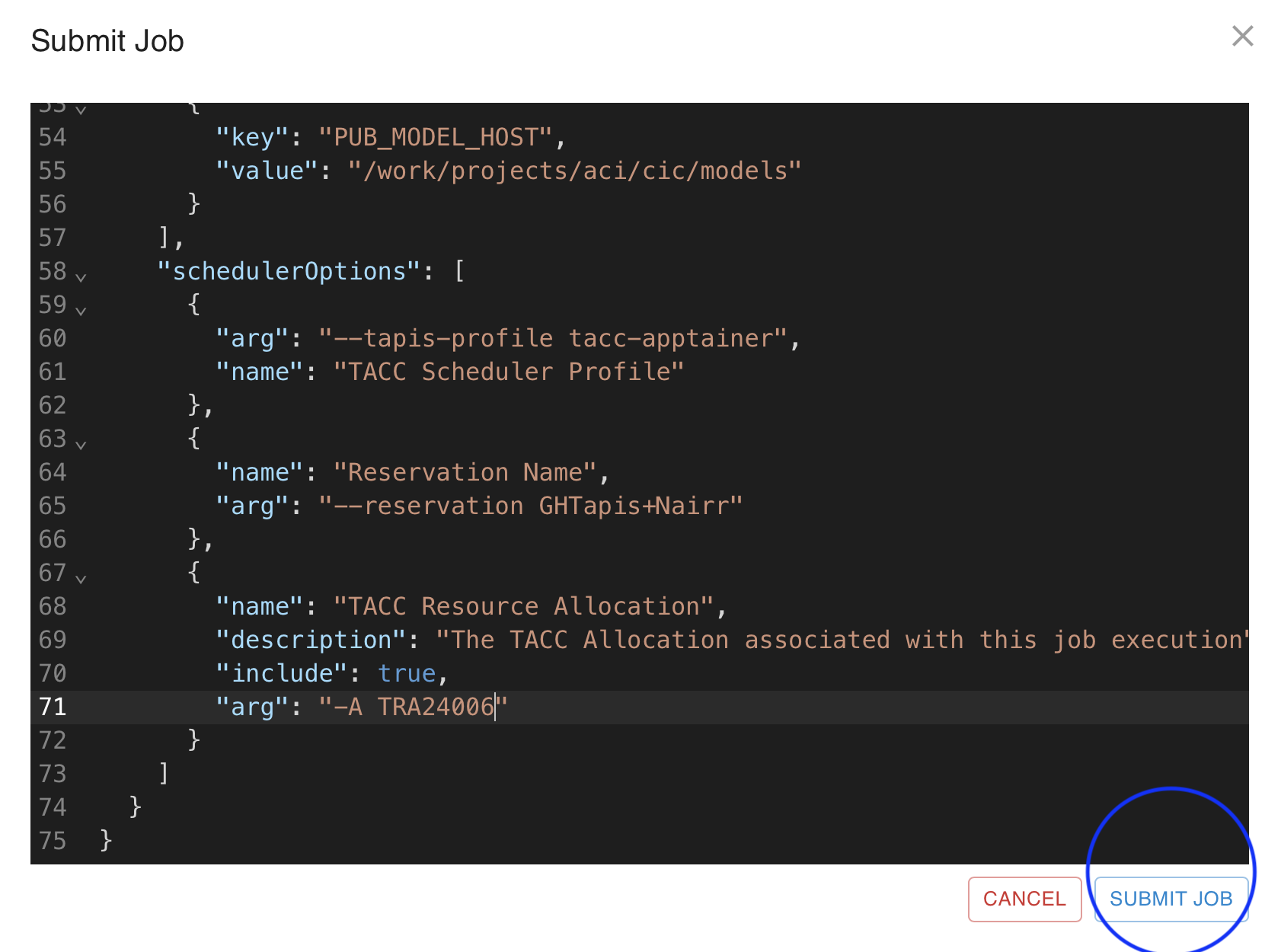

3. Submit Job

Once the job definition is pasted, click Submit Job.
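If you prefer scripting to the UI, you can submit the same job definition through the Tapis Jobs REST API. That API accepts the definition as a POST to /v3/jobs/submit, with the access token in the X-Tapis-Token header. The sketch below uses only the Python standard library. It rests on two assumptions to confirm with your instructors: the public tenant's base URL is https://public.tapis.io, and you already hold a valid Tapis access token. Obtaining the token is outside the scope of this sketch.

```python
import json
import urllib.request

# ASSUMPTION: base URL of the Tapis "public" tenant; confirm before use.
TAPIS_BASE_URL = "https://public.tapis.io"


def build_submit_request(job: dict, tapis_token: str,
                         base_url: str = TAPIS_BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a Tapis Jobs submit request for a job definition."""
    return urllib.request.Request(
        url=f"{base_url}/v3/jobs/submit",
        data=json.dumps(job).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Tapis v3 services read the caller's access token from this header.
            "X-Tapis-Token": tapis_token,
        },
    )


def submit_job(job: dict, tapis_token: str) -> str:
    """Submit the job and return the job UUID reported by Tapis."""
    with urllib.request.urlopen(build_submit_request(job, tapis_token)) as resp:
        payload = json.load(resp)
    # Tapis wraps results as {"status": ..., "message": ..., "result": {...}}.
    return payload["result"]["uuid"]
```

For example, suppose you save the JSON above to a file (the name flexserv_job.json is hypothetical). Loading it with `json.load` and passing it to `submit_job` together with your token returns the new job's UUID.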

4. View Job

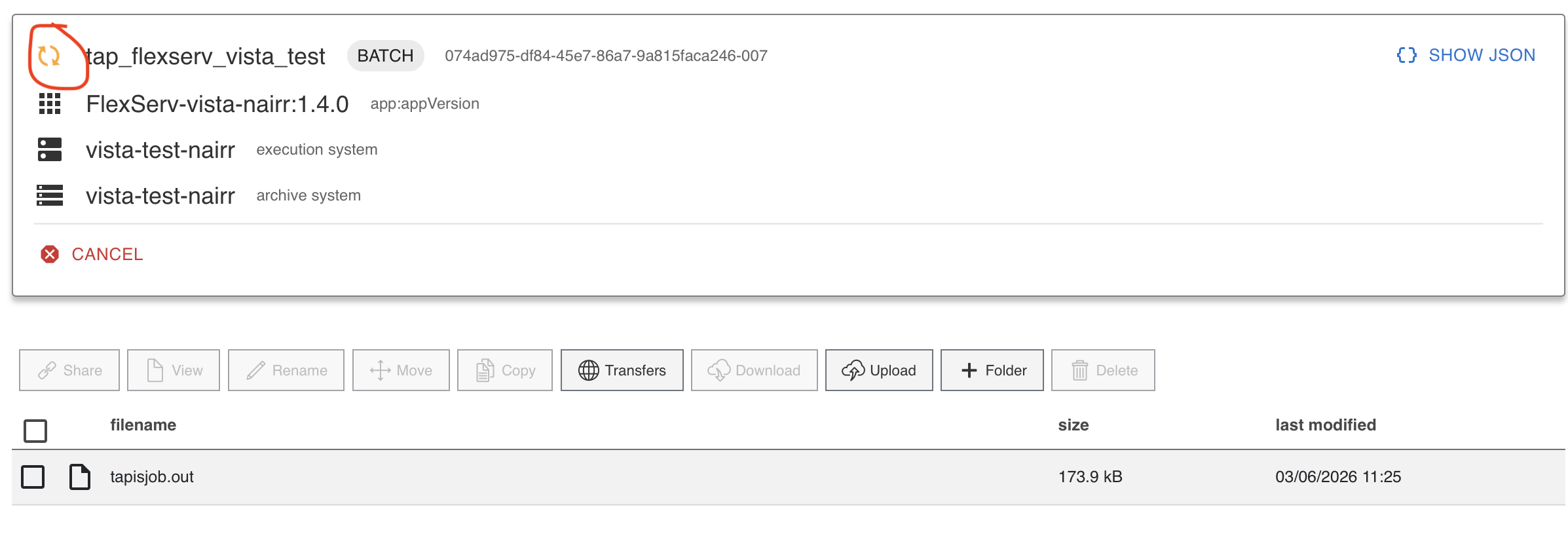

Once the job is submitted successfully, go to the left panel and, under Core Services, click the Jobs tab. You should see an active job. Its status will change over time, and when the job enters the running state, the server is up and running.
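You can also watch for the running state from a script. The sketch below queries GET /v3/jobs/{uuid}/status on the Tapis Jobs API. It stops once the job is RUNNING (the point at which the FlexServ server comes up) or has reached one of the terminal states FINISHED, CANCELLED, or FAILED. It assumes the public tenant's base URL is https://public.tapis.io. The status fetcher is passed in as a function, so the polling logic can be exercised without a network connection.

```python
import json
import time
import urllib.request
from typing import Callable

# ASSUMPTION: base URL of the Tapis "public" tenant; confirm before use.
TAPIS_BASE_URL = "https://public.tapis.io"

# Terminal Tapis job states: once reached, the status no longer changes.
TERMINAL_STATES = {"FINISHED", "CANCELLED", "FAILED"}


def fetch_status(job_uuid: str, tapis_token: str,
                 base_url: str = TAPIS_BASE_URL) -> str:
    """Return the current status string of a Tapis job."""
    req = urllib.request.Request(
        f"{base_url}/v3/jobs/{job_uuid}/status",
        headers={"X-Tapis-Token": tapis_token},
    )
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)["result"]["status"]


def wait_until_running(get_status: Callable[[], str], poll_seconds: float = 15.0,
                       max_polls: int = 240) -> str:
    """Poll until the job is RUNNING or terminal; raise if it never gets there."""
    for _ in range(max_polls):
        status = get_status()
        if status == "RUNNING" or status in TERMINAL_STATES:
            return status
        time.sleep(poll_seconds)
    raise TimeoutError("job did not start within the polling window")
```

A typical call is `wait_until_running(lambda: fetch_status(job_uuid, token))`. If it returns FAILED or CANCELLED instead of RUNNING, check the job's output files for the reason.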

5. View the job output file to get the FlexServ server port and token

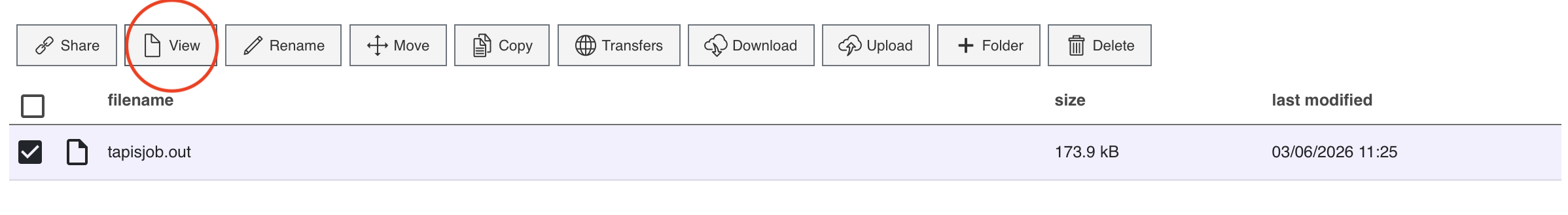

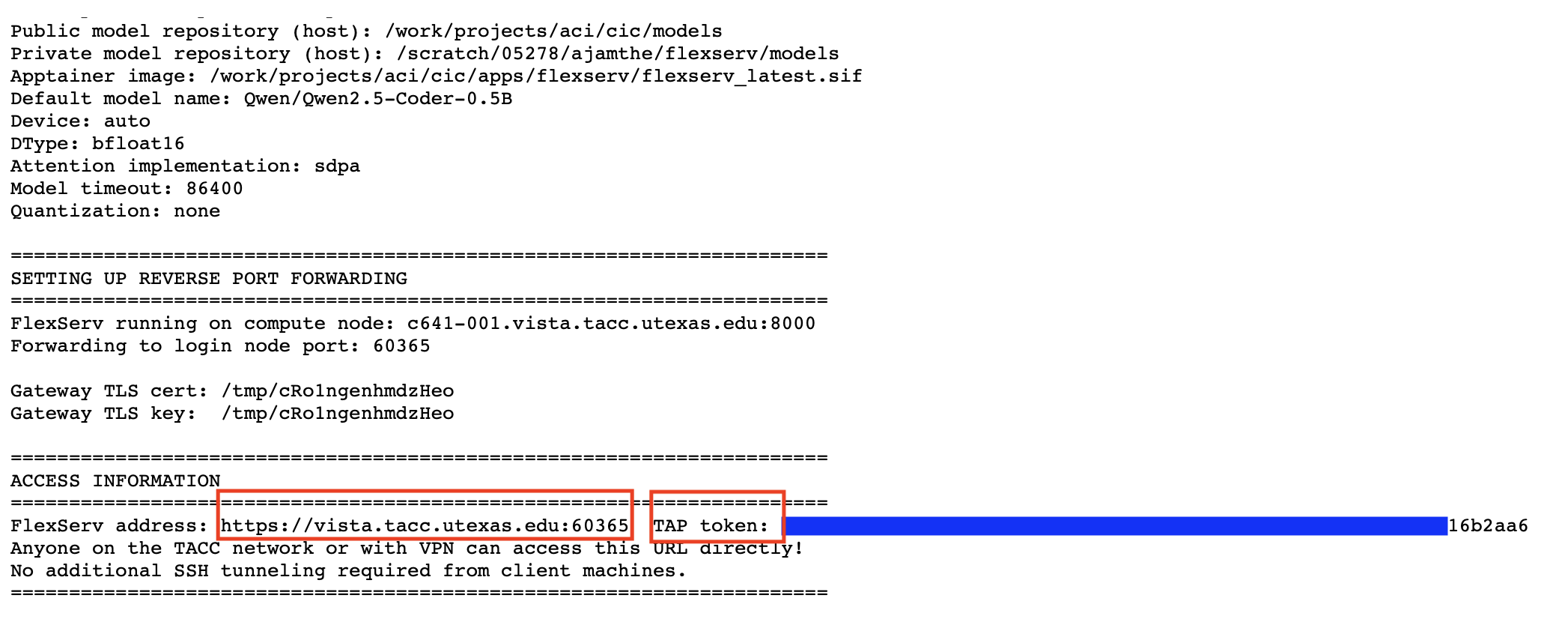

Step 5a) Click the tapisjob.out file and then click View to open it.

Step 5b) Once tapisjob.out opens, look at the ACCESS INFORMATION section to get the URL of your FlexServ server, including the port number, and the TAP token. Save both to a notepad.
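If you download tapisjob.out instead of reading it in the browser, a few lines of Python can pull out the server address. The exact layout of the ACCESS INFORMATION section is not reproduced here. This sketch therefore assumes only that the first http(s) URL with an explicit port after that heading is your FlexServ address. You should still copy the TAP token by hand.

```python
import re
from typing import Optional, Tuple

# Matches http(s)://host:port, optionally followed by a path.
URL_WITH_PORT = re.compile(r"https?://[\w.-]+:(\d+)\S*")


def find_flexserv_url(job_output: str) -> Optional[Tuple[str, int]]:
    """Return (url, port) for the first URL with an explicit port after the
    ACCESS INFORMATION heading, or None if the heading or URL is missing.

    ASSUMPTION: the FlexServ address is the first such URL after the heading.
    """
    marker = job_output.find("ACCESS INFORMATION")
    if marker == -1:
        return None
    match = URL_WITH_PORT.search(job_output, marker)
    if match is None:
        return None
    return match.group(0), int(match.group(1))
```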

Stage 4.2: Play with FlexServ

Congratulations! You are now running FlexServ. Next, you can explore the FlexServ UI and send your first chat.

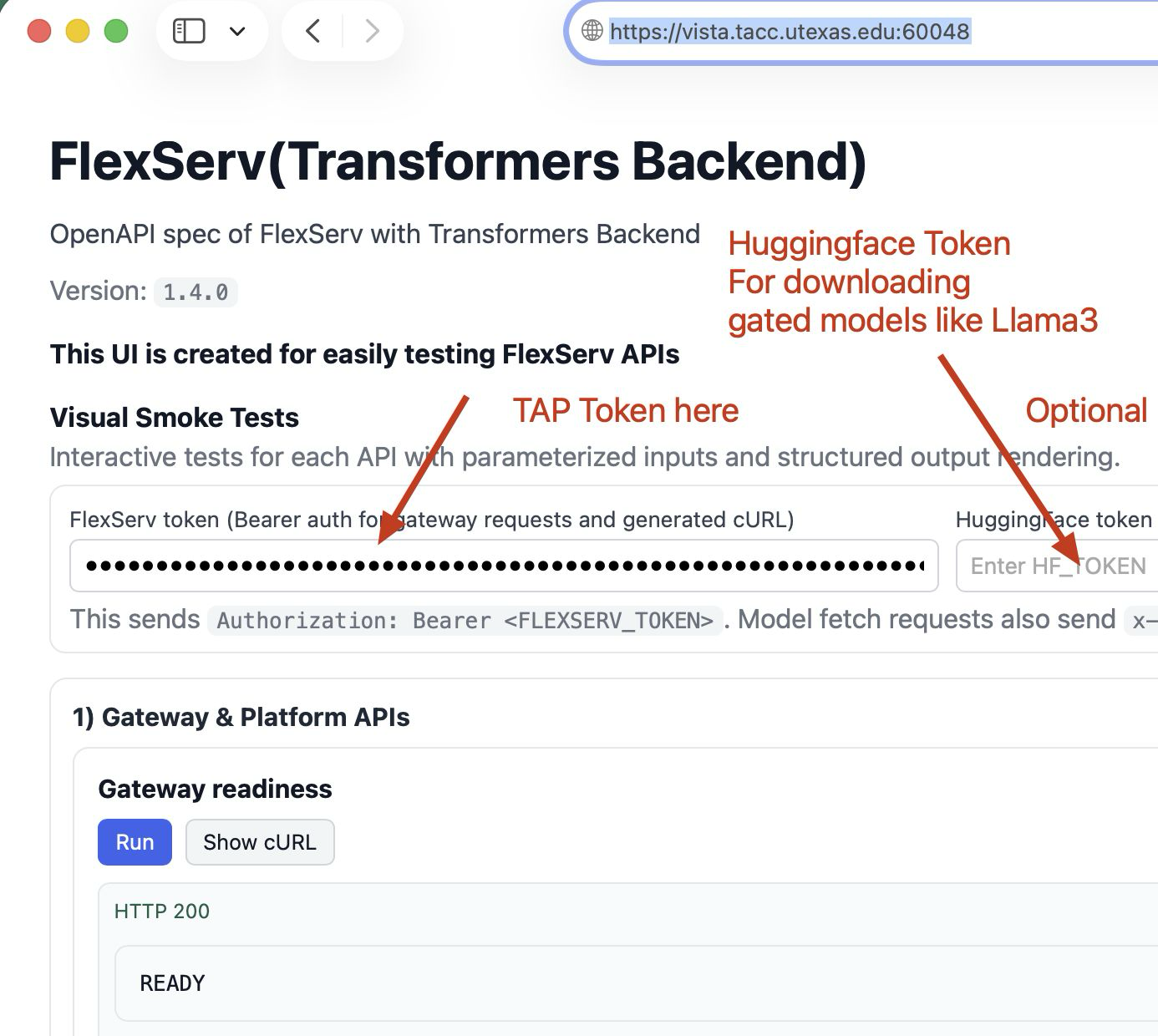

Go to https://vista.tacc.utexas.edu:<port>, using the port number from tapisjob.out, and enter the TAP token from the same file, as shown in the figure.

Step 4.2.1: Meet the FlexServ Resource Monitor

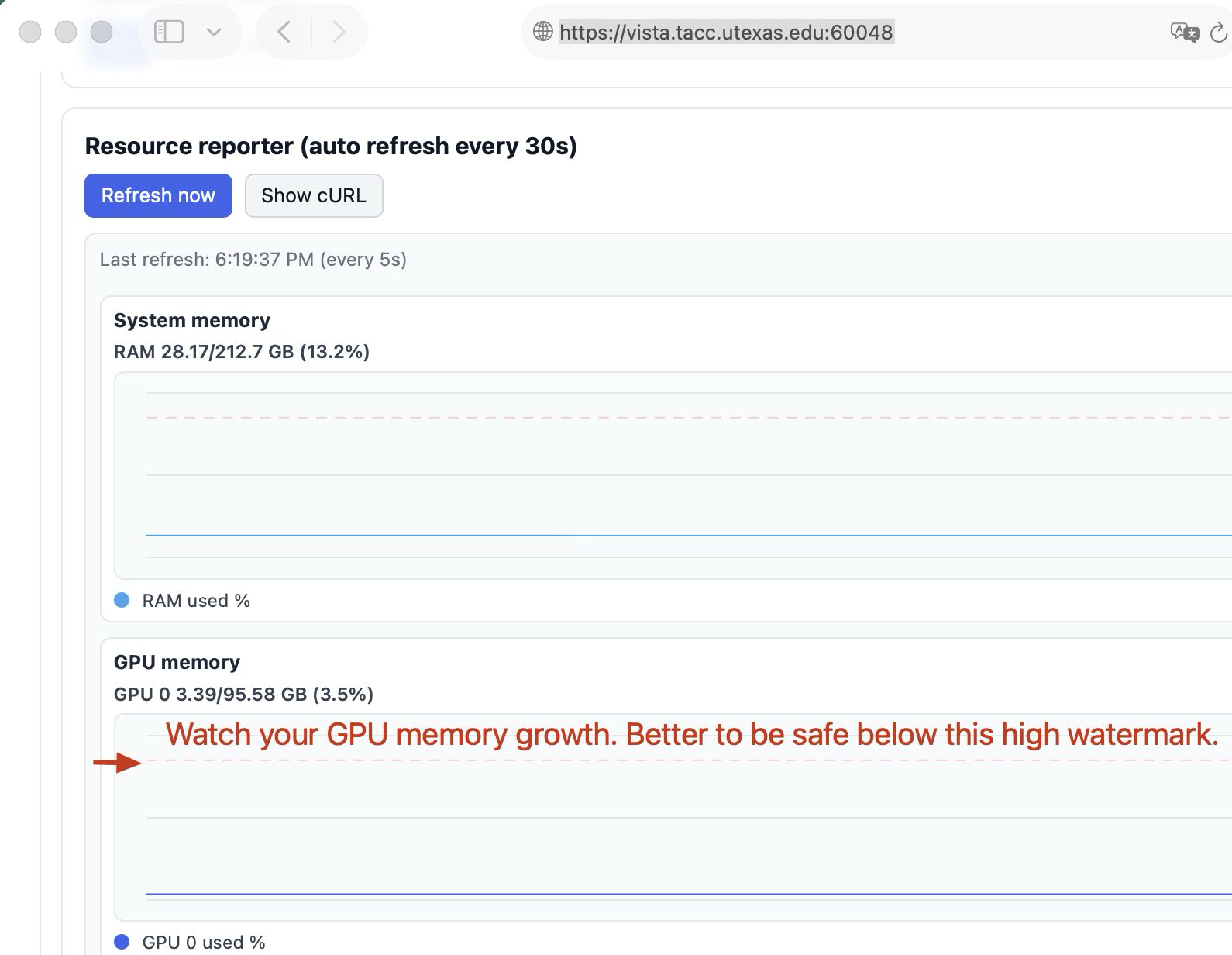

The FlexServ Resource Reporter provides a visualization of the current resource usage of your FlexServ server, including GPU, CPU, and memory utilization. This can help you monitor the performance of your models and optimize resource allocation for better efficiency. You can access the Resource Reporter from the FlexServ UI.

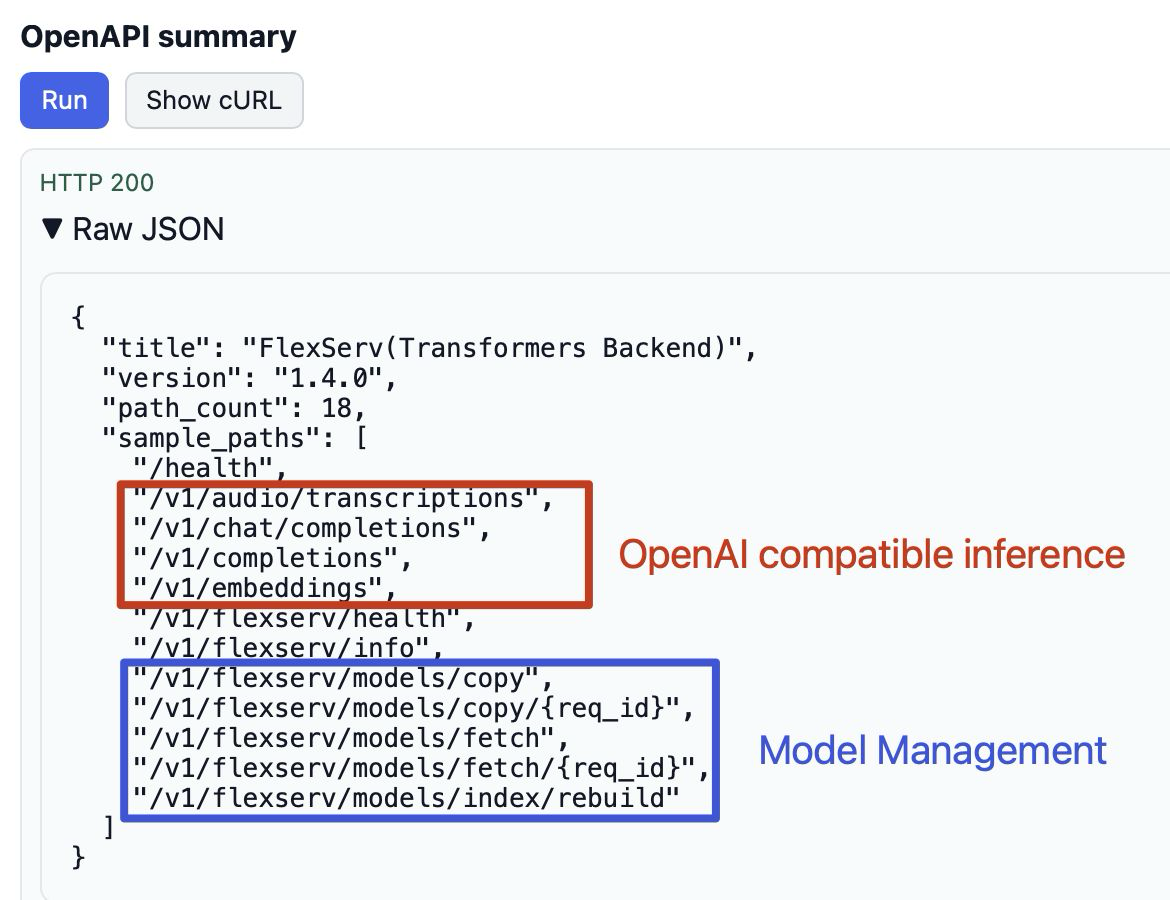

Step 4.2.2: View FlexServ RESTful API Summary

The FlexServ RESTful APIs let you interact with the FlexServ server programmatically. You can use the OpenAI-compatible APIs for operations such as sending chat messages, generating text, and creating embeddings. The model management APIs let you manage the models stored locally on your FlexServ service. Visit http(s)://your-flexserv-url/redoc for the full API documentation.
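Because the APIs are plain HTTP, any client can call them. The sketch below builds requests with the Python standard library against the URL from tapisjob.out. This section does not state how FlexServ expects the TAP token to be sent. The code therefore assumes a standard `Authorization: Bearer` header. Compare it with the header shown by the UI's Show cURL button and adjust if they differ.

```python
import json
import urllib.request
from typing import Optional


def build_flexserv_request(base_url: str, path: str, token: str,
                           payload: Optional[dict] = None) -> urllib.request.Request:
    """Build a FlexServ request: GET when payload is None, JSON POST otherwise."""
    # ASSUMPTION: the TAP token is accepted as a Bearer token.
    headers = {"Authorization": f"Bearer {token}"}
    data = None
    if payload is not None:
        headers["Content-Type"] = "application/json"
        data = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        base_url.rstrip("/") + path,
        data=data,
        headers=headers,
        method="POST" if data is not None else "GET",
    )


def call_flexserv(req: urllib.request.Request) -> dict:
    """Send a request to a JSON endpoint and decode the response body."""
    with urllib.request.urlopen(req) as resp:
        return json.load(resp)
```

For example, `build_flexserv_request(url, "/v1/chat/completions", token, payload)` builds a POST that `call_flexserv` sends and decodes. Omitting the payload, as for the /redoc page, produces a plain GET.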

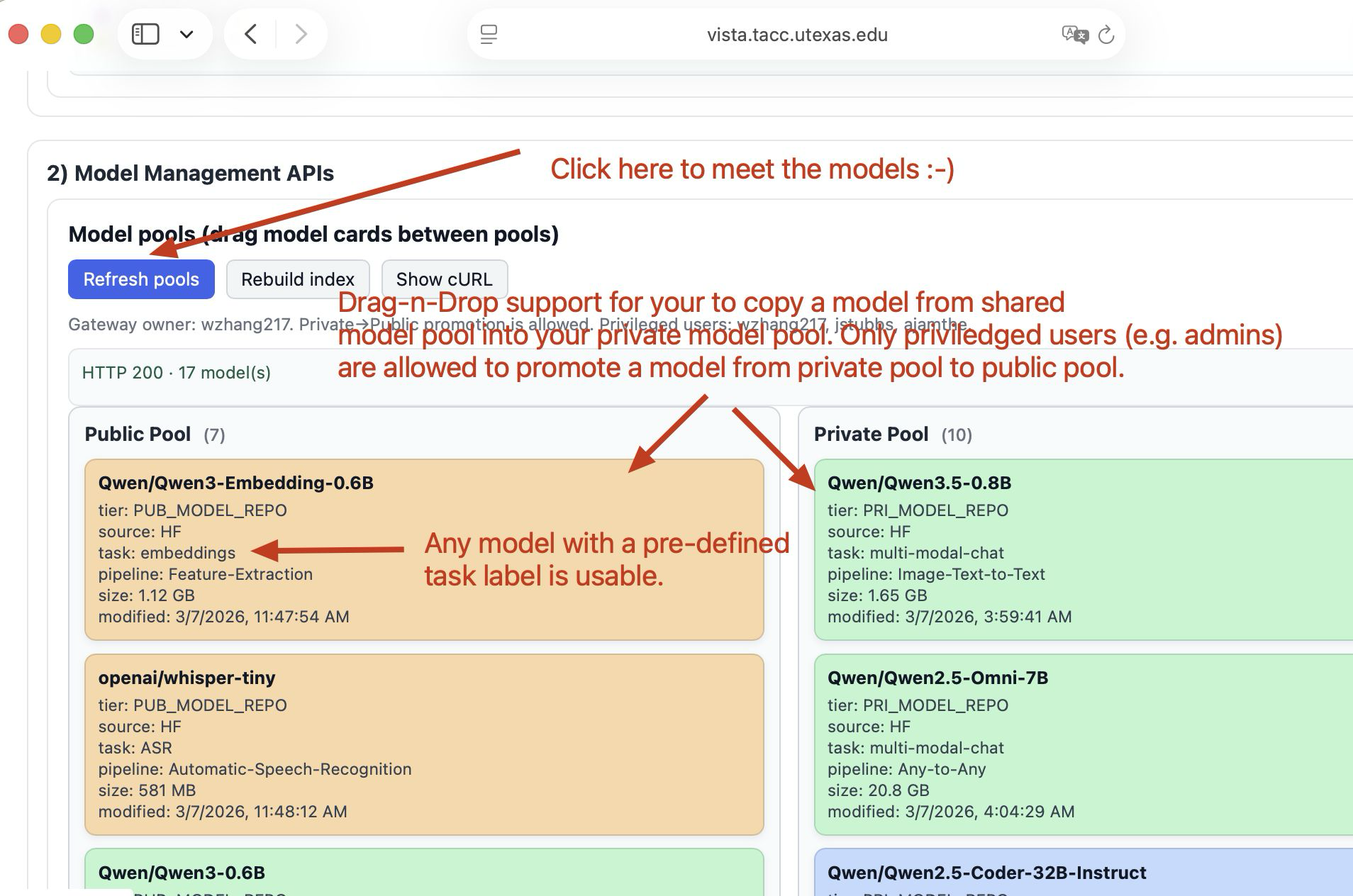

Step 4.2.3: Explore FlexServ Model Manager

The visual model manager provides an intuitive interface for managing the models on your FlexServ server. You can view the list of available models, check their status, and perform actions such as downloading new models, copying a model from the public pool to your private pool, and unpacking downloaded model archives.

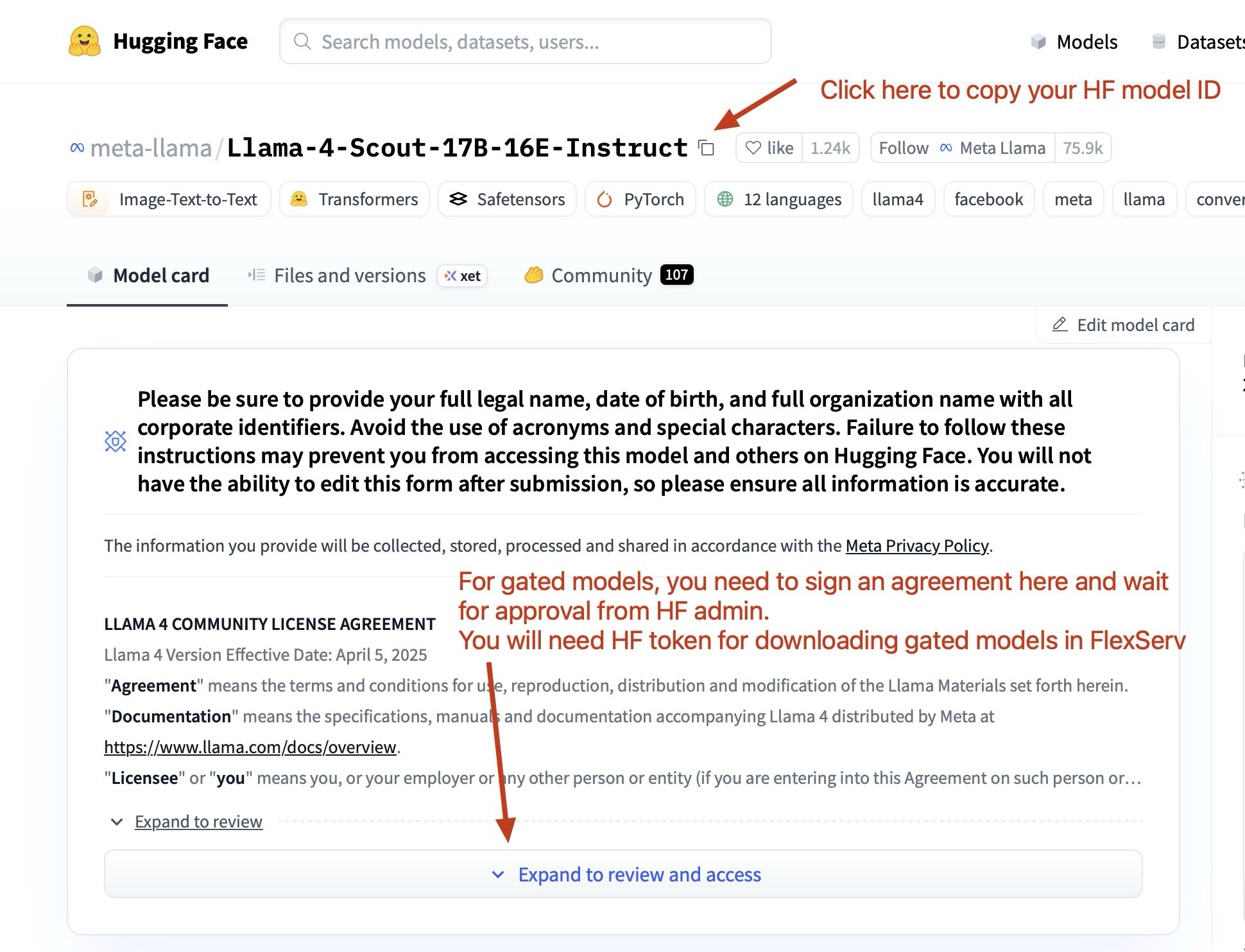

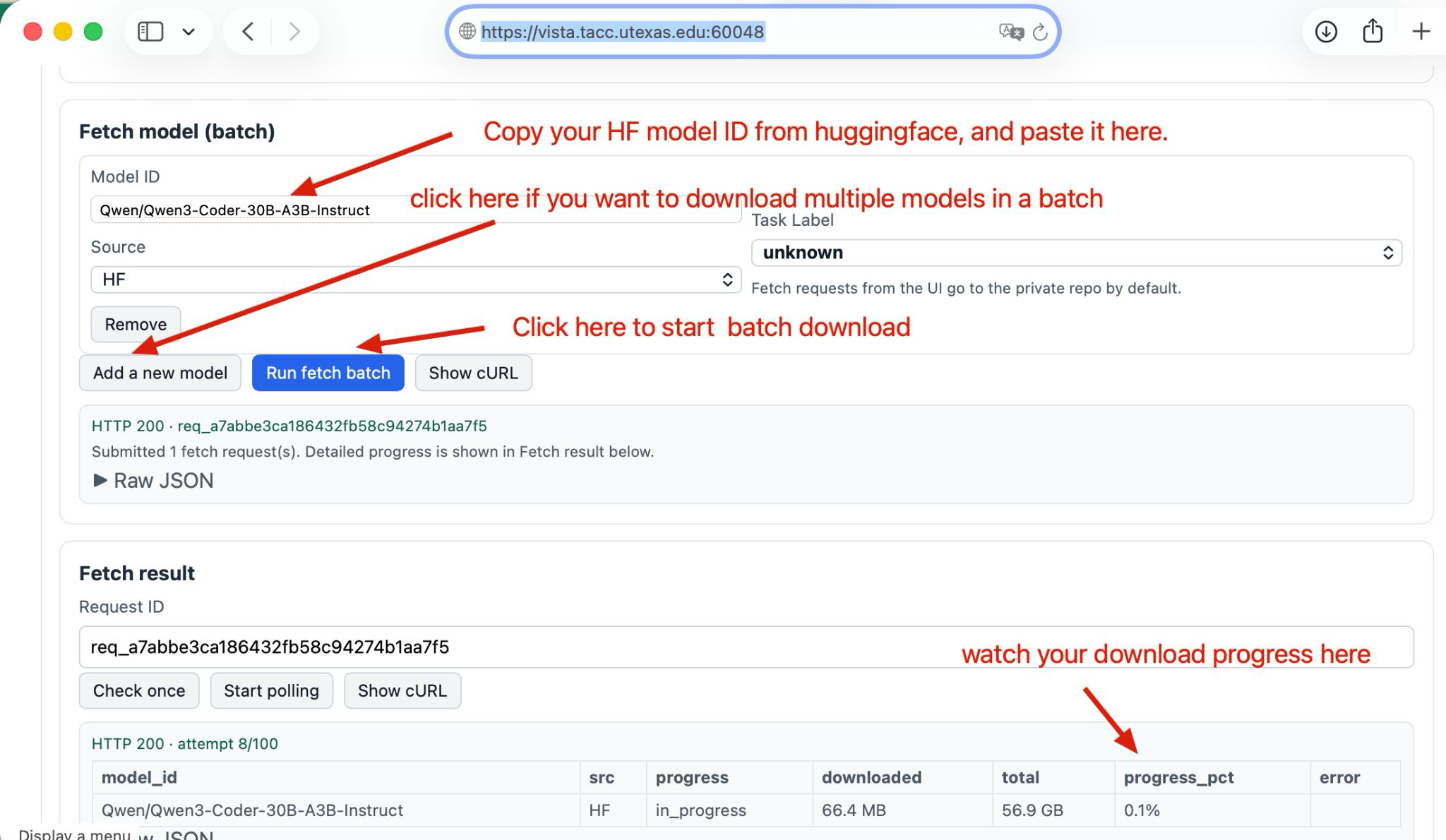

Downloading Models from Hugging Face to FlexServ

To download one or more models from Hugging Face, first go to the Hugging Face website, click Models, search for the model you want, and open its model page. The model name appears under the model title, and you use that name to download the model to your FlexServ server.

For example, to download the Qwen3.5-0.8B model, search for “Qwen” on Hugging Face, open the Qwen3.5-0.8B model page, and copy “Qwen/Qwen3.5-0.8B” as the model name. In the FlexServ UI, you can enter more than one model name in the download section and fetch them all in parallel by clicking the Run fetch batch button. The UI shows the download status, and once a model is downloaded, you can use it for inference right away.

Unpacking Your Own Model into FlexServ

We also support unpacking archived models (e.g., tar.gz, zip) directly into the FlexServ model repository; the Unpack button extracts the archive for you. However, for self-owned models, you must build a model index file and include it in the archive. This is an advanced feature that we will not cover here, but we will provide guidance on our website later.

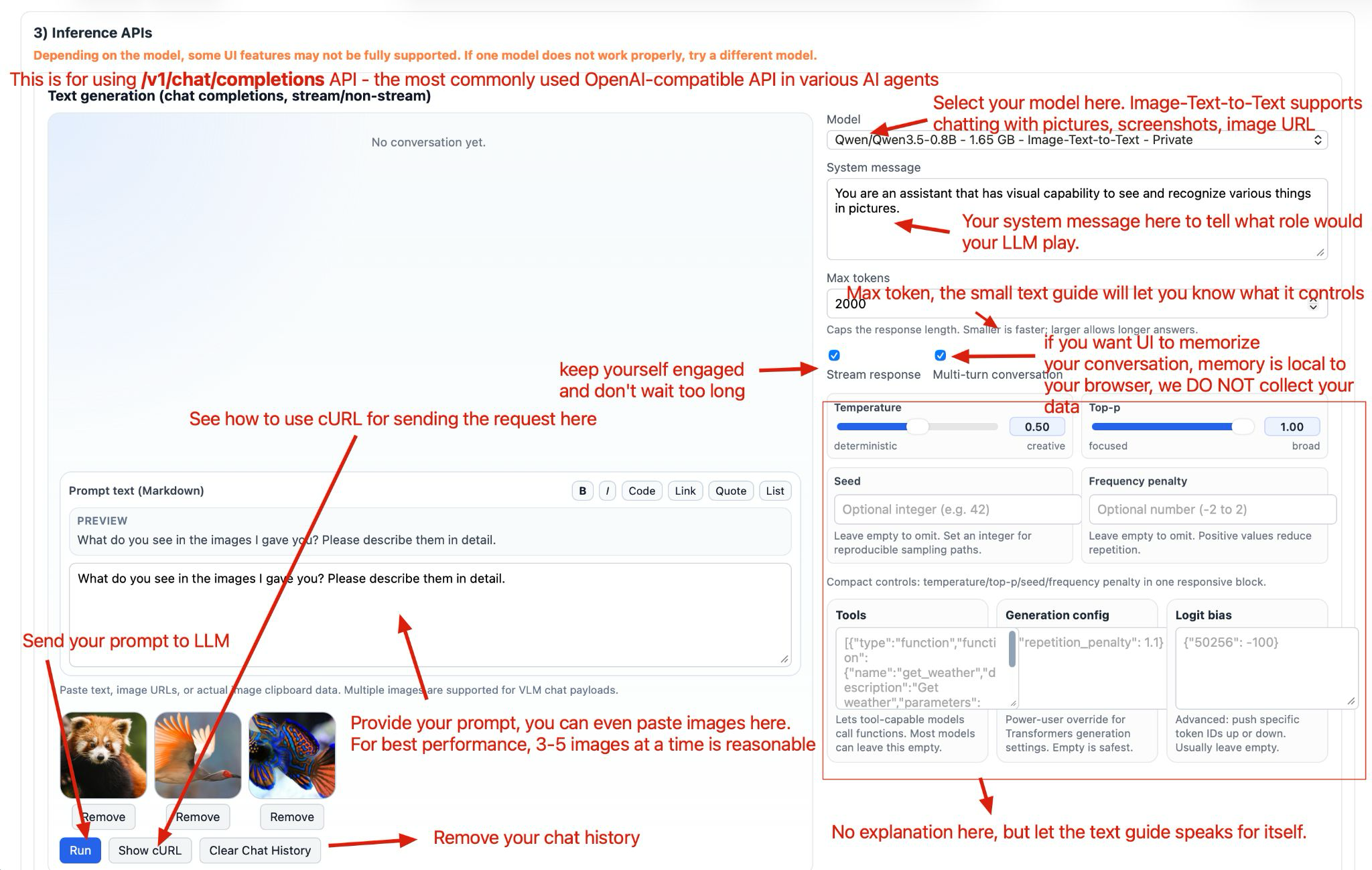

Step 4.2.4: Multi-modal Chat with FlexServ

The multi-modal chat feature in the FlexServ UI is built on FlexServ's /v1/chat/completions API, which is widely used by today's agentic software. It lets you converse with the model while also sending images as part of the conversation. This is particularly useful when you want to ask questions about images or hold a discussion that involves visual context. You can upload an image, and the model will respond based on both the text and the visual information. Note that you must select an image-text-to-text model for multi-modal chat. You can also use text-to-text models for plain-text chat, such as code generation or question answering without images.
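Under the hood, a multi-modal request to /v1/chat/completions follows the OpenAI message format. In that format, a user message's content is a list of parts: a text part plus an image_url part whose URL may be a base64 data URI. The sketch below builds such a body and reads the first reply. It assumes FlexServ accepts the OpenAI format unchanged; the UI's Show cURL output shows the exact body the UI sends. The model you name must be an image-text-to-text model loaded on your server.

```python
import base64
from typing import Optional


def build_chat_payload(model: str, text: str, image_bytes: Optional[bytes] = None,
                       image_mime: str = "image/png", temperature: float = 0.7,
                       top_p: float = 0.9, max_tokens: int = 512) -> dict:
    """Build an OpenAI-style chat body with a text part and an optional image part.

    ASSUMPTION: FlexServ accepts the OpenAI content-part format as-is.
    """
    content = [{"type": "text", "text": text}]
    if image_bytes is not None:
        b64 = base64.b64encode(image_bytes).decode("ascii")
        # Images are inlined as a data URI, so no public image hosting is needed.
        content.append({"type": "image_url",
                        "image_url": {"url": f"data:{image_mime};base64,{b64}"}})
    return {
        "model": model,
        "messages": [{"role": "user", "content": content}],
        # The same sampling controls that the UI exposes.
        "temperature": temperature,
        "top_p": top_p,
        "max_tokens": max_tokens,
    }


def first_reply(response: dict) -> str:
    """Return the assistant text from an OpenAI-style chat completion."""
    return response["choices"][0]["message"]["content"]
```

For example, `build_chat_payload(model, "What animal is this?", open("photo.png", "rb").read())` produces a body you can POST to /v1/chat/completions. Here photo.png is a hypothetical local file.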

To send an image in the chat, click in the Markdown editor and paste either an image URI or a screenshot from your clipboard; the image will appear next to the editor. Press Run to start chatting with your selected model. If you want the FlexServ UI to remember your conversation history, check the multi-turn conversation checkbox, and the UI will store your chat history in your browser's local storage. This history stays entirely in your browser, which keeps it private, and we DO NOT collect any of your data. A button is provided to clear the conversation history, and you can also clear your browser data to remove everything.
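In a script, multi-turn chat works the same way the checkbox does in the UI: you keep the history yourself and resend it with every request. The minimal sketch below keeps turns in memory rather than in browser local storage. It assumes the OpenAI-style response shape, with the reply at choices[0].message.content.

```python
from typing import List, Optional


class Conversation:
    """Keeps chat history so that each request carries all earlier turns."""

    def __init__(self, model: str, system_prompt: Optional[str] = None) -> None:
        self.model = model
        self.messages: List[dict] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def next_payload(self, user_text: str) -> dict:
        """Record the user's turn and return the full request body to send."""
        self.messages.append({"role": "user", "content": user_text})
        # Copy the list so later turns do not mutate an already-built payload.
        return {"model": self.model, "messages": list(self.messages)}

    def record_reply(self, response: dict) -> str:
        """Store the assistant's answer so that the next turn includes it.

        ASSUMPTION: OpenAI-style response with choices[0].message.content.
        """
        reply = response["choices"][0]["message"]["content"]
        self.messages.append({"role": "assistant", "content": reply})
        return reply

    def clear(self) -> None:
        """Forget the conversation, keeping only an optional system prompt."""
        self.messages = [m for m in self.messages if m["role"] == "system"]
```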

The UI also provides intuitive controls for tuning some of the most important chat parameters, such as temperature, top_p, and max_tokens. Adjust them to see how the model's response changes.

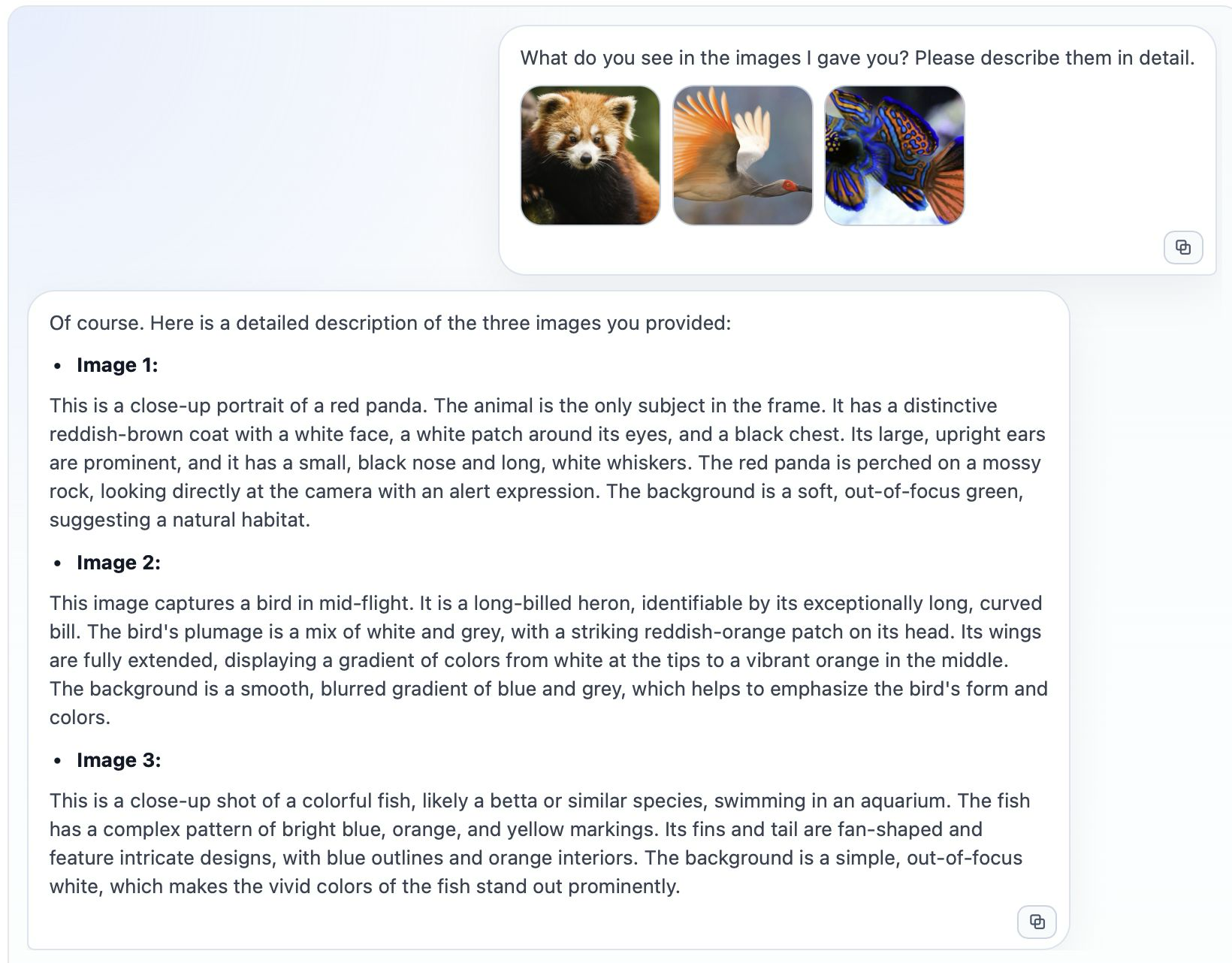

If everything works, the model's response appears in the chat window, and it should reflect both your text and the image you sent. You can continue the conversation by sending more text or images, and with multi-turn conversation enabled, the model will keep track of the context to provide coherent responses.

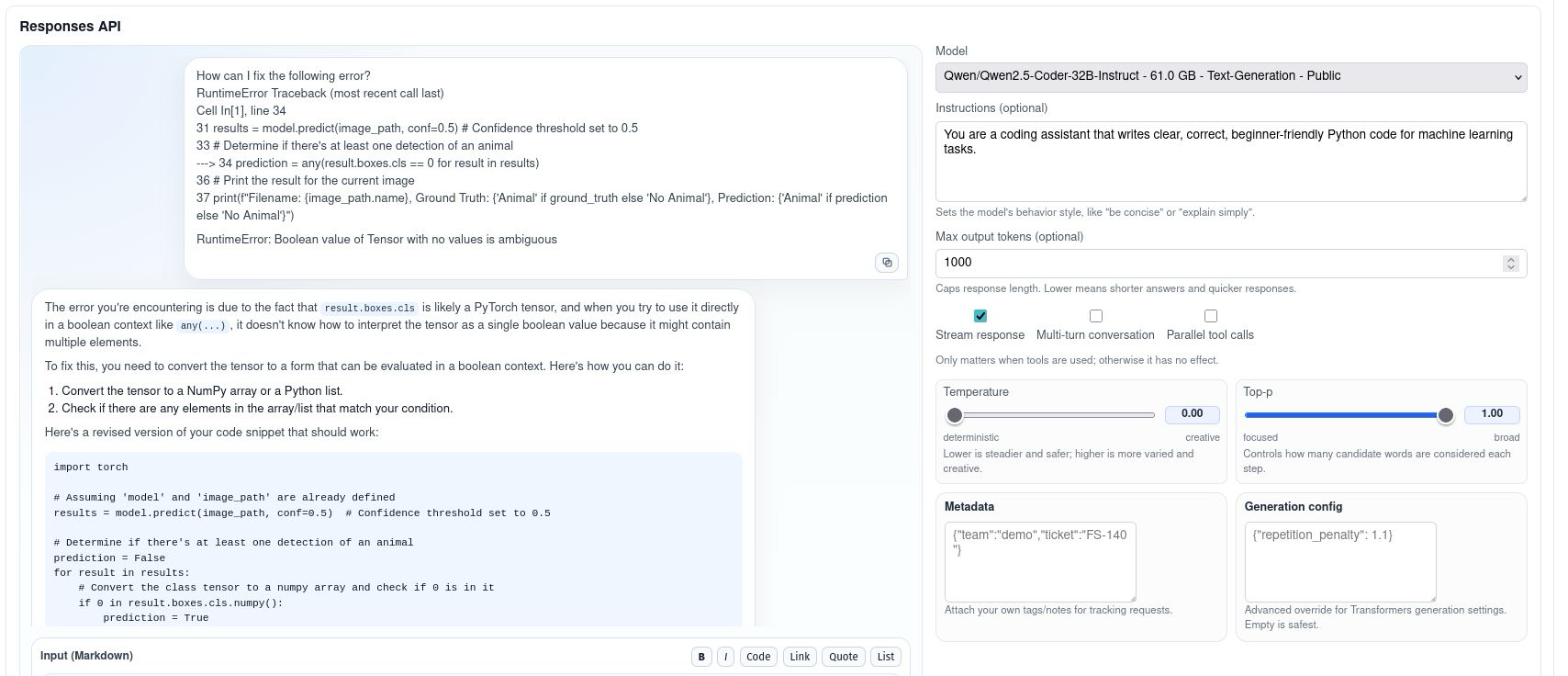

Step 4.2.5: Text Generation with Responses API in FlexServ

This feature is built on FlexServ's /v1/responses API, an OpenAI-compatible endpoint for generating responses from the model. Again, the UI provides a Markdown editor for your prompt, and you generate text by clicking the Run button. You can also adjust parameters such as temperature, top_p, and max_tokens to see how the model's response changes. The generated response appears in the response window, and you can keep the conversation going by sending more prompts.
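A request to /v1/responses is simpler than a chat request, because the prompt goes in a single input field. This sketch follows OpenAI's Responses API conventions. In those conventions, the length limit is named max_output_tokens, and generated text arrives as output_text parts inside the message items of the output list. The UI labels this control max_tokens, so check Show cURL to see which field name your FlexServ version actually sends.

```python
def build_responses_payload(model: str, prompt: str, temperature: float = 0.7,
                            top_p: float = 0.9, max_output_tokens: int = 512) -> dict:
    """Build an OpenAI-style /v1/responses body for plain-text generation.

    ASSUMPTION: FlexServ uses OpenAI's field names (input, max_output_tokens).
    """
    return {
        "model": model,
        "input": prompt,
        "temperature": temperature,
        "top_p": top_p,
        "max_output_tokens": max_output_tokens,
    }


def extract_output_text(response: dict) -> str:
    """Concatenate all output_text parts from message items in the output list."""
    parts = []
    for item in response.get("output", []):
        if item.get("type") != "message":
            continue  # skip non-message items such as reasoning summaries
        for content in item.get("content", []):
            if content.get("type") == "output_text":
                parts.append(content.get("text", ""))
    return "".join(parts)
```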

Note that the /v1/responses API currently supports only text-based generation, while multi-modal chat relies on the /v1/chat/completions API. For conversations that include images, use the chat interface rather than the responses interface. The responses interface will, however, play a critical role in another of our demos this afternoon, where we use FlexServ for code generation to produce a real image recognition program that you can run on Vista, so stay tuned!

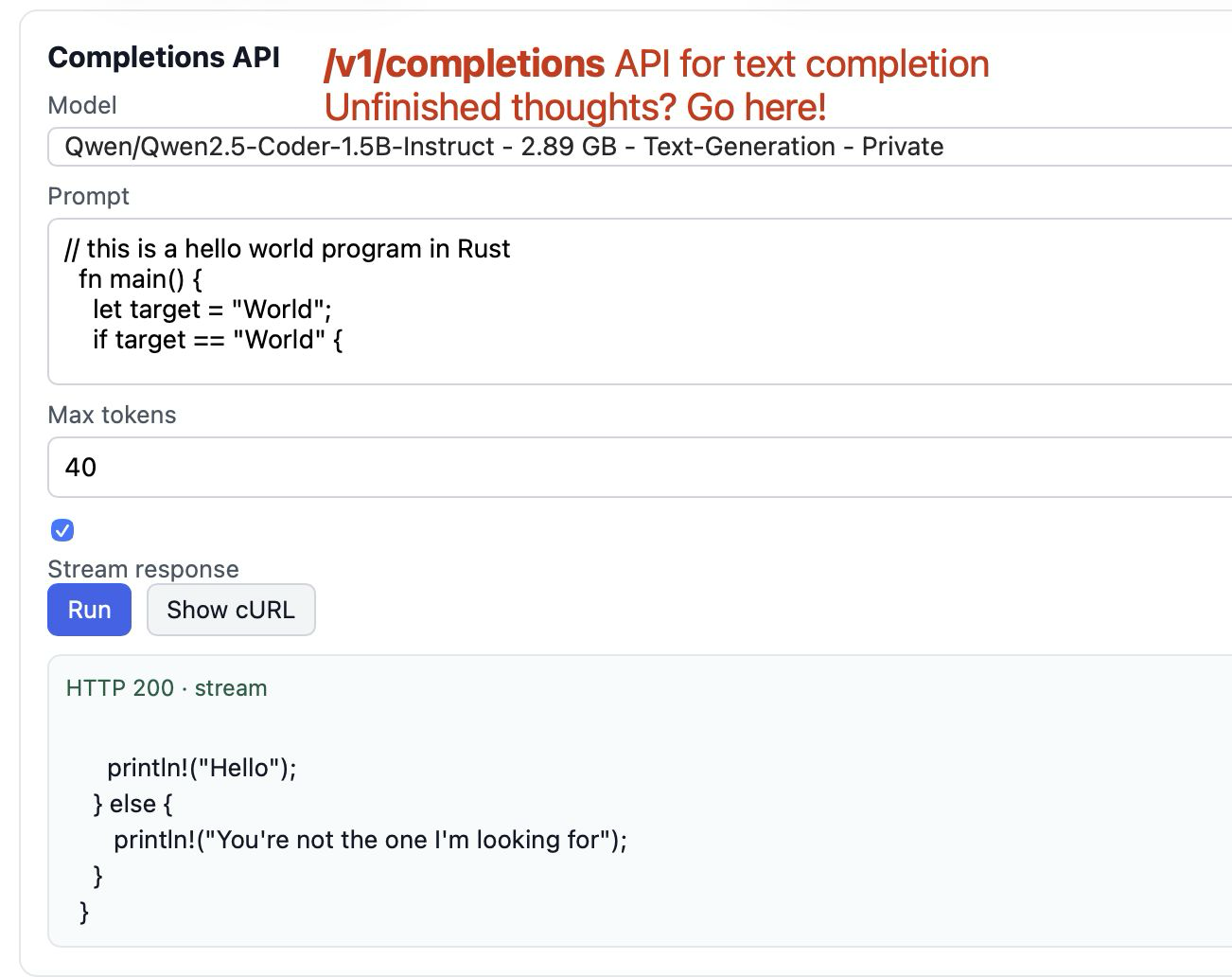

Step 4.2.6: Text Completion with Completions API in FlexServ

Text completion is another important FlexServ feature, built on the /v1/completions API. The feature is currently simpler than the others, but if you have an unfinished thought or sentence, the model can help you complete it. Enter the incomplete text in the editor and click Run, and the model will generate the completion for you.
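With /v1/completions, the model returns only the continuation, not your original text. The sketch below assumes the OpenAI completions format, with a prompt field in the request and choices[0].text in the response. It builds the request body and stitches the prompt and continuation back together.

```python
def build_completion_payload(model: str, prompt: str, max_tokens: int = 64,
                             temperature: float = 0.7) -> dict:
    """Build an OpenAI-style /v1/completions body (ASSUMPTION: same field names)."""
    return {"model": model, "prompt": prompt,
            "max_tokens": max_tokens, "temperature": temperature}


def completed_text(prompt: str, response: dict) -> str:
    """Join the prompt with the continuation returned in choices[0].text."""
    return prompt + response["choices"][0]["text"]
```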

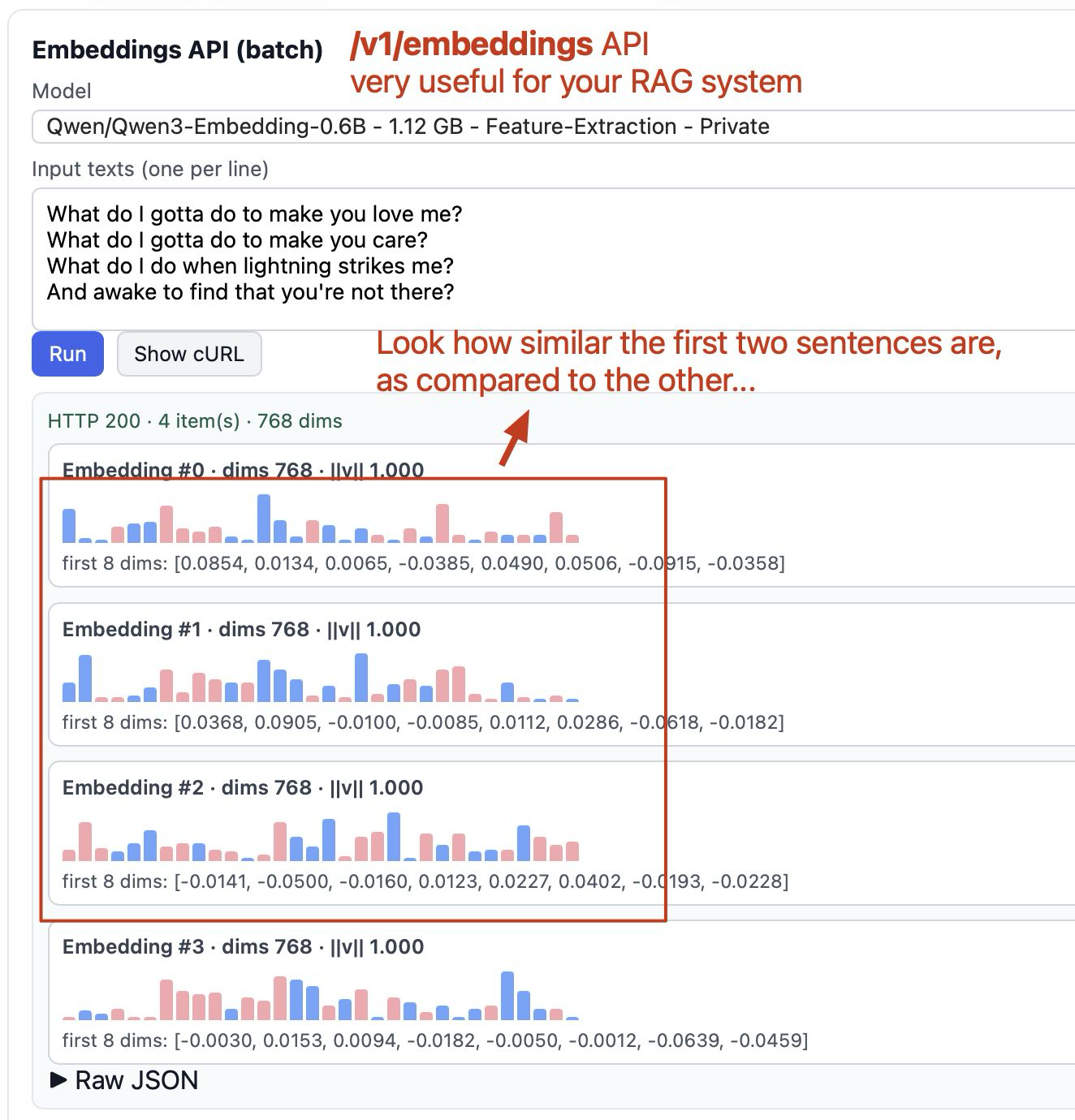

Step 4.2.7: Generating Embeddings with FlexServ

Embedding generation is essential for many AI applications, such as semantic search, clustering, and recommendation systems. With FlexServ, you can generate embeddings for your text data using the /v1/embeddings API. In the FlexServ UI, enter the sentences you want to embed, one per line, and click Run. You can then inspect the embeddings by clicking Raw JSON, or explore them with the embedding visualization on the page.
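To use embeddings outside the UI, for example for semantic search, send the same one-sentence-per-line input as a list and compare the returned vectors. The sketch below assumes OpenAI-style request and response bodies: input is a list of strings, and each data item carries an index and an embedding. It compares vectors with cosine similarity. That is a common choice for embeddings, but it is not necessarily what the FlexServ visualization uses.

```python
import math
from typing import List, Sequence


def build_embeddings_payload(model: str, text_block: str) -> dict:
    """Turn one-sentence-per-line text into an embeddings request; skip blank lines."""
    lines = [line.strip() for line in text_block.splitlines() if line.strip()]
    return {"model": model, "input": lines}


def vectors_from_response(response: dict) -> List[List[float]]:
    """Return embeddings in input order (ASSUMPTION: OpenAI-style data[].index)."""
    items = sorted(response["data"], key=lambda d: d["index"])
    return [item["embedding"] for item in items]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0
```

For semantic search, embed a query and your documents in one request, then rank the documents by their cosine similarity to the query vector.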

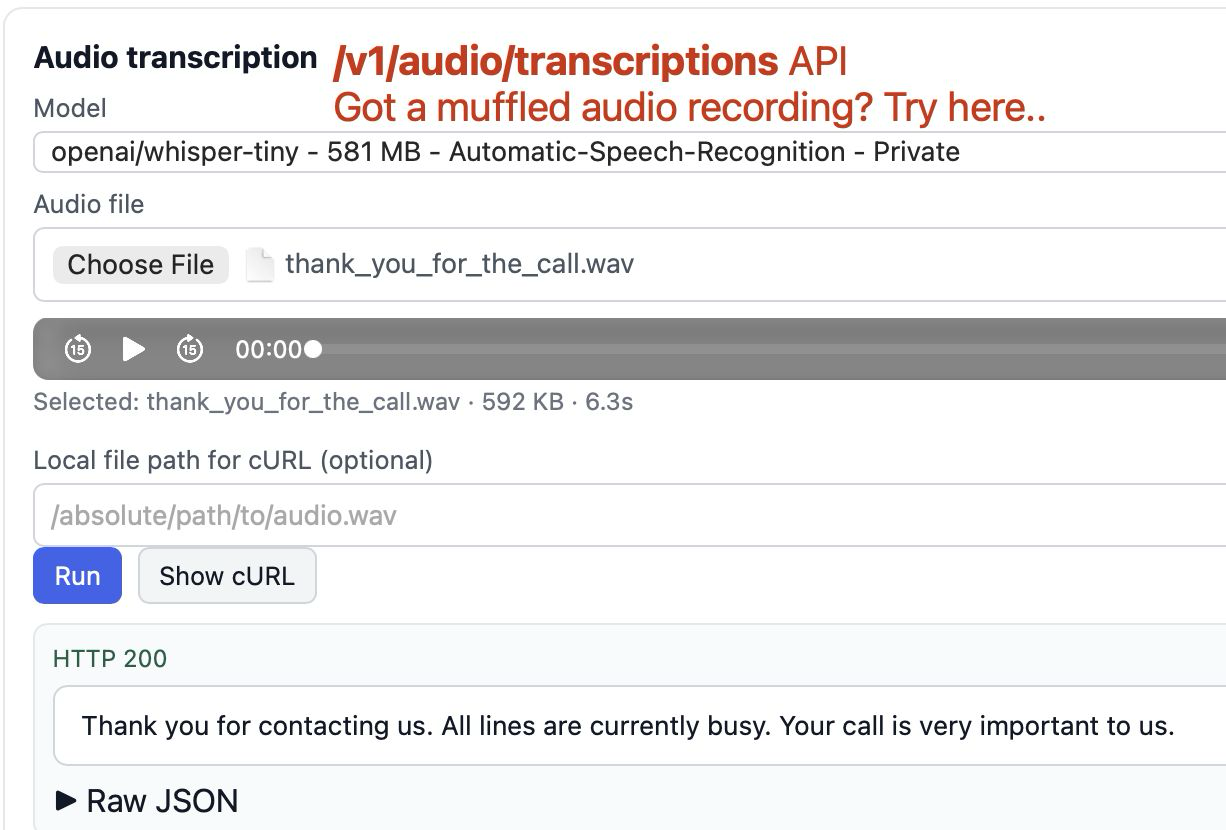

Step 4.2.8: Audio Transcription with FlexServ

Audio transcription is another exciting FlexServ feature: it lets you transcribe audio files into text using automatic speech recognition (ASR) models. With FlexServ, you can upload your audio files and get transcriptions in a matter of seconds. This is particularly useful for meeting transcription, podcast transcription, and any other situation where you want to convert audio into text for easier analysis and reference. Upload your audio file in the UI and click Run, and the transcription will appear in the response window. You can also play the audio file in the UI to confirm that the transcription matches its content.
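This section does not name the transcription endpoint. If FlexServ mirrors OpenAI's /v1/audio/transcriptions, as it does for its other endpoints, the audio is uploaded as multipart/form-data with a file part and a model field. The result then comes back as JSON with a text field. The standard-library sketch below builds such a body. The endpoint path, the field names, and the audio/wav default are all assumptions to check against Show cURL.

```python
import uuid
from typing import Dict, Tuple


def build_multipart(fields: Dict[str, str], file_field: str, filename: str,
                    file_bytes: bytes, file_mime: str = "audio/wav") -> Tuple[bytes, str]:
    """Encode form fields plus one file as multipart/form-data.

    Returns (body, content_type_header).
    ASSUMPTION: field names follow OpenAI's transcription API ("file", "model").
    """
    boundary = uuid.uuid4().hex  # random, so it will not occur inside the audio data
    parts = []
    for name, value in fields.items():
        parts.append((f"--{boundary}\r\n"
                      f'Content-Disposition: form-data; name="{name}"\r\n\r\n'
                      f"{value}\r\n").encode("utf-8"))
    header = (f"--{boundary}\r\n"
              f'Content-Disposition: form-data; name="{file_field}"; filename="{filename}"\r\n'
              f"Content-Type: {file_mime}\r\n\r\n").encode("utf-8")
    parts.append(header + file_bytes + b"\r\n")
    parts.append(f"--{boundary}--\r\n".encode("utf-8"))  # closing boundary
    return b"".join(parts), f"multipart/form-data; boundary={boundary}"
```

To send the body, pass it as `data` to `urllib.request.Request` with method POST. Use the returned content type as the Content-Type header, add the same token header the UI uses, and read the transcription from the JSON response.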

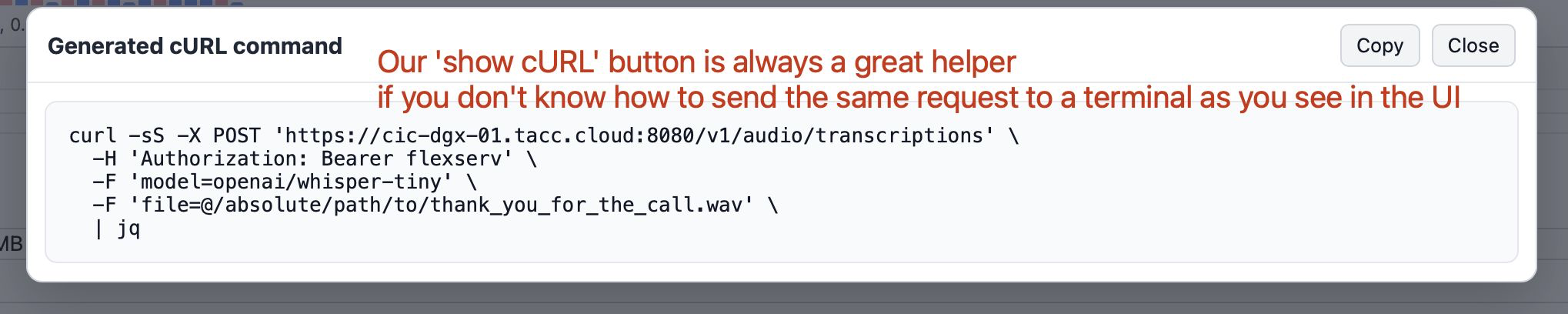

Step 4.2.9: Getting the cURL Command for a Request in the FlexServ UI

Across the sections of the UI, you will see a Show cURL button that displays the cURL command for the request you are making. This is particularly useful if you want to interact with the FlexServ server from your own scripts: copy the cURL command and adapt it to send requests without going through the UI. It also makes it easier to integrate FlexServ into your existing workflows and applications.

Upcoming Next: From Prompt to Program - Build an Animal Detection App with FlexServ

Please come back for our code generation session in the afternoon to see how you can use FlexServ for real work. We will show you how to use FlexServ to generate an image recognition program that detects small animals, and how to run that program on Vista as a Tapis job!